Newton's method

Newton's method, also called the Newton-Raphson method, is a numerical root-finding algorithm: a method for finding where a function $f$ attains the value zero, or in other words, solving the equation $f(x) = 0$. Most root-finding algorithms used in practice are variations of Newton's method.

Description of the algorithm

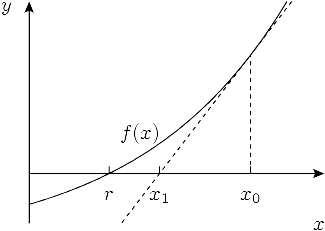

Suppose the function $f$ has a root at $x = r$. The idea behind Newton's method is that, if $f$ is a smooth function, its graph can be approximated around a point $x_0$ by its tangent at $x_0$. If the approximation is good enough, the point where the tangent crosses the $x$-axis must lie close to $r$. This suggests that if we choose the point $x_0$ to lie somewhere close to $r$, the point where the tangent crosses the $x$-axis, which we may call $x_1$, will lie even closer to $r$. The idea is illustrated in the diagram above.

Now suppose that $x_1$ really does lie closer to $r$ than $x_0$. Then we can determine the tangent of $f$ at $x_1$ to obtain a second point $x_2$ that lies closer still. More generally, given $x_k$, we can obtain a better approximation $x_{k+1}$.

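Newton's method hinges on the fact that we can calculate the root of a tangent line directly from its straight-line equation $y = ax + b$, and that we can calculate $a$ and $b$ in terms of the function value and the function's derivative at the same point, $x_k$. The tangent at $x_k$ has slope $f'(x_k)$ and passes through the point $(x_k, f(x_k))$, so it crosses the $x$-axis where $f(x_k) + f'(x_k)(x - x_k) = 0$. Put together, the relation between $x_k$ and $x_{k+1}$ can be expressed as

$$x_{k+1} = x_k - \frac{f(x_k)}{f'(x_k)}.$$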

This formula defines Newton's method. Applying it once to obtain an improved approximation is called performing a Newton step, Newton update or Newton iteration. Newton's method consists of repeatedly using this formula to obtain a sequence of successively better estimates for $r$.
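The repeated Newton step can be sketched in a few lines of Python. This is a minimal illustration rather than a robust implementation: the function name `newton`, the fixed step count, and the test function $x^2 - 2$ are assumptions made for the example, and there is no safeguard against a zero derivative.

```python
def newton(f, fprime, x0, steps):
    """Return [x0, x1, ..., x_steps], where each entry follows from a Newton step."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        # One Newton step: follow the tangent at x down to the x-axis.
        xs.append(x - f(x) / fprime(x))
    return xs


# Illustrative use (assumed test function): the positive root of x**2 - 2.
approximations = newton(lambda x: x * x - 2.0, lambda x: 2.0 * x, x0=1.0, steps=6)
print(approximations[-1])
```

Returning the whole list of iterates, rather than only the last value, makes it easy to inspect how quickly the approximations settle.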

Let us illustrate Newton's method with a concrete numerical example. The golden ratio (φ ≈ 1.618) is the larger of the two roots of the polynomial $f(x) = x^2 - x - 1$; to calculate this root, we can use the Newton iteration

$$x_{k+1} = x_k - \frac{x_k^2 - x_k - 1}{2 x_k - 1}$$

with the initial estimate $x_0 = 1$. Using double-precision floating-point arithmetic, which amounts to a precision of roughly 16 decimal digits, Newton's method produces the following sequence of approximations:

- x0 = 1.0

- x1 = 2.0

- x2 = 1.6666666666666667

- x3 = 1.6190476190476191

- x4 = 1.6180344478216817

- x5 = 1.618033988749989

- x6 = 1.6180339887498947

- x7 = 1.6180339887498949

- x8 = 1.6180339887498949

Since the last iteration does not change the value, we can be reasonably sure that we have obtained a value for the golden ratio that is correct to the precision used.
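The sequence above can be reproduced with a short Python sketch, assuming the polynomial $x^2 - x - 1$ and the starting value 1 used in this example. The function name `golden_ratio_iterates` is hypothetical, and the last printed digit may differ slightly between platforms.

```python
def golden_ratio_iterates(x0=1.0, steps=8):
    """Newton iterates for f(x) = x**2 - x - 1, whose larger root is the golden ratio."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        # f(x) = x**2 - x - 1 and f'(x) = 2*x - 1
        xs.append(x - (x * x - x - 1.0) / (2.0 * x - 1.0))
    return xs


iterates = golden_ratio_iterates()
for k, x in enumerate(iterates):
    print(f"x{k} = {x!r}")
```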

Convergence analysis

In the description above, we relied on geometrical intuition to argue that Newton's method ought to produce a sequence of points that converge to the root $r$: that is, using the language of limits, we should be able to expect that

$$\lim_{k \to \infty} x_k = r.$$

We will show that this is indeed the case, under suitable conditions. In fact, we will be able to give a formula for how quickly the values $x_k$ approach $r$.

There is an important catch, however. In the geometrical description, we made the assumption that $f$ closely resembles a straight line at least locally (close to the root), and this assumption is in fact essential to the functioning of Newton's method. Most functions do not globally resemble lines: in general, function graphs contain curvature, stationary points, inflection points, and even discontinuities. Does Newton's method work in these cases?

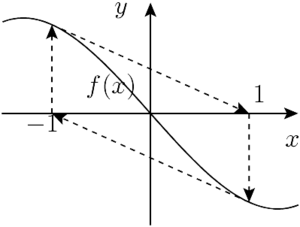

The answer is maybe. If $f$ is ill-behaved or $x_0$ lies very far from $r$, Newton's method may produce a sequence of numbers that jump back and forth erratically or even gradually move away from the root. If we are lucky, a value $x_k$ may eventually end up close to a root by chance. But if we are unlucky, Newton's method will fail to converge at all. For example, for some equations and initial guesses it produces an endlessly repeating sequence of values, as shown in the figure above.

It may also happen that Newton's method converges to a root, but the "wrong" one. For example, if we attempt to calculate $\pi$ as the smallest positive solution to $\sin x = 0$, we must choose an initial value close to 3.14. Newton's method started near 0 would converge to 0; if started near 6.28, it would converge to $2\pi$, and so on. If we start with $x_0$ close to $\pi/2$, where the sine curve is horizontal, $x_1$ may end up close to $n\pi$ for some enormous $n$.
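Both failure modes are easy to observe numerically. The sketch below makes two assumptions: the helper name `newton_iterates` is made up for the example, and the cubic $x^3 - 2x + 2$ with starting value 0 is a standard textbook instance of a cycle, chosen for illustration and not necessarily the equation the figure shows. The sine runs use the starting values discussed in the paragraph above.

```python
import math


def newton_iterates(f, fprime, x0, steps):
    """Return the list of Newton iterates x0, x1, ..., x_steps."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - f(x) / fprime(x))
    return xs


# Failure to converge: the iterates alternate between 0 and 1 forever.
cycle = newton_iterates(lambda x: x**3 - 2.0 * x + 2.0,
                        lambda x: 3.0 * x**2 - 2.0, 0.0, 6)
print(cycle)

# Convergence to different roots of sin(x) = 0, depending on the start.
sine_roots = {start: newton_iterates(math.sin, math.cos, start, 20)[-1]
              for start in (0.5, 3.0, 6.0)}
print(sine_roots)

# Starting close to pi/2, the nearly horizontal tangent throws x1 far away.
far_away = newton_iterates(math.sin, math.cos, 1.5707, 1)[-1]
print(far_away)
```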

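But let us assume that the root $r$ and the initial guess $x_0$ both lie in a region where $f$ is well-behaved. In particular, we assume that $f$ is repeatedly (at least twice) differentiable in this region. Expanding $f$ in a Taylor series around $x_k$ and evaluating at $r$ gives

$$0 = f(r) = f(x_k) + f'(x_k)(r - x_k) + \frac{1}{2} f''(\xi_k)(r - x_k)^2$$

for some $\xi_k$ between $r$ and $x_k$. Assuming $f'(x_k) \neq 0$, dividing through by $f'(x_k)$ gives

$$0 = \frac{f(x_k)}{f'(x_k)} + (r - x_k) + \frac{f''(\xi_k)}{2 f'(x_k)} (r - x_k)^2.$$

Moving the first two terms to the left-hand side and substituting the Newton update expression for $x_{k+1}$ then gives

$$x_{k+1} - r = \frac{f''(\xi_k)}{2 f'(x_k)} (x_k - r)^2.$$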

If the second derivative is bounded and the first derivative does not approach 0, the error at step $k+1$ is hence roughly proportional to the square of the error at step $k$. Under these conditions, if at some step $k$ the error $|x_k - r|$ is small enough relative to the size of the factor $f''/(2f')$ near $r$, subsequent iterations will reduce the error at least quadratically (that is, at a second-order rate).

In other words, if the error at step $k$ is $\epsilon$, the error at the next step is roughly $\epsilon^2$: each step roughly doubles the number of correct digits. This fast rate of convergence is characteristic of Newton's method. Looking back at the example of calculating the golden ratio, we can verify that the rate of convergence is quadratic in practice: the number of correct digits after the decimal point is 0, 0, 1, 2, 5, 12, and thereafter the convergence is limited by the finite precision which we used to carry out the arithmetic.
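The quadratic behaviour can be checked numerically on the golden ratio example by dividing each error by the square of the previous one. The sketch assumes the polynomial $x^2 - x - 1$ and the starting value 1 from that example; the names `PHI` and `golden_newton_errors` are invented for it. For this polynomial the ratio should settle near $f''(r) / (2 f'(r)) = 1/\sqrt{5} \approx 0.447$.

```python
import math

PHI = (1.0 + math.sqrt(5.0)) / 2.0  # reference value of the golden ratio


def golden_newton_errors(x0=1.0, steps=5):
    """Return the Newton iterates for x**2 - x - 1 and their absolute errors."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - (x * x - x - 1.0) / (2.0 * x - 1.0))
    errors = [abs(x - PHI) for x in xs]
    return xs, errors


xs, errors = golden_newton_errors()
# Ratio of each error to the square of the previous error.
ratios = [errors[k + 1] / errors[k] ** 2 for k in range(len(errors) - 1)]
for k, ratio in enumerate(ratios):
    print(k, errors[k], ratio)
```

Only the first five steps are used, because beyond that the error reaches the limit of double precision and the ratio stops being meaningful.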

The second-order or quadratic convergence depends on the factor $f''/(2f')$ in the error formula being small. There are two ways in which it may not be. The first possibility is that the second derivative in the numerator is large, which simply means that the function graph has a large amount of curvature and hence is poorly approximated by its tangent; we have already illustrated with examples how this may cause trouble.

The second possibility is that the first derivative in the denominator is very small; that is, the tangent is nearly horizontal. In particular, the derivative becomes arbitrarily small near the root if $f'(r) = 0$. This is an important case: it corresponds to $f$ having a multiple root at $r$. Newton's method can be proved to still converge if the root is a multiple root, but the rate of convergence in that case is merely linear: each iteration roughly increments, rather than multiplies, the number of correct digits by a fixed amount.
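A double root makes the linear rate visible. The sketch below uses the assumed test function $(x - 1)^2$, which has a double root at 1; for it, each Newton step exactly halves the error. The function name is hypothetical.

```python
def double_root_errors(x0=2.0, steps=10):
    """Errors |x_k - 1| of Newton's method applied to f(x) = (x - 1)**2."""
    x = x0
    errors = [abs(x - 1.0)]
    for _ in range(steps):
        # f(x) = (x - 1)**2 and f'(x) = 2*(x - 1)
        x = x - (x - 1.0) ** 2 / (2.0 * (x - 1.0))
        errors.append(abs(x - 1.0))
    return errors


errors_double = double_root_errors()
print(errors_double)
```

Each step gains about 0.3 decimal digits here, in contrast to the doubling of correct digits seen for a simple root.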

Applications

So far we have only considered solving the equation $f(x) = 0$ in the real numbers, but Newton's method can be applied or generalized to various more complicated problems. To begin with, it can of course be used to solve the more general equation $f(x) = g(x)$ by rewriting it as $f(x) - g(x) = 0$. It can also be used to calculate inverse functions. If $f$ has an inverse function $f^{-1}$, calculating $f^{-1}(y)$ amounts to finding an $x$ that solves the equation

$$f(x) - y = 0.$$

In many cases, Newton's method is a good choice for solving this equation. For example, the well-known and very efficient Babylonian method for calculating square roots is equivalent to using Newton's method to solve the equation $x^2 - y = 0$ for $x$ ($\sqrt{y}$ being the inverse function of $x^2$).
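For the square root, the Newton step $x - (x^2 - y)/(2x)$ simplifies to the average $(x + y/x)/2$, which is the Babylonian rule. A minimal sketch follows, in which the function name, the starting value and the fixed step count are assumptions chosen for simplicity; the step count suits moderately sized inputs only.

```python
import math


def babylonian_sqrt(y, steps=30):
    """Approximate sqrt(y) using the Newton step for x**2 - y = 0."""
    if y < 0:
        raise ValueError("y must be non-negative")
    if y == 0:
        return 0.0
    x = max(y, 1.0)  # assumed starting value, always at or above the root
    for _ in range(steps):
        # x - (x*x - y) / (2*x) simplifies to the average of x and y/x.
        x = 0.5 * (x + y / x)
    return x


for y in (2.0, 10.0, 0.25):
    print(y, babylonian_sqrt(y), math.sqrt(y))
```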

Newton's method also works in the complex numbers; for example, it can be used to find complex roots of polynomials. Its behavior for complex analytic functions is similar to that for differentiable real functions; in particular, the rate of convergence is quadratic sufficiently close to a root. In the case of a polynomial with real coefficients, or any function that assumes only real values for real input, the initial value must however be complex, or else the values generated by Newton's method never leave the real line. When Newton's method is applied to a polynomial with complex roots, the region of convergence around each root has a complicated boundary, which defines a fractal called a Newton fractal.
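The need for a complex starting value can be seen with the assumed example $z^2 + 1$, whose roots are $\pm i$. In the sketch below (the function name and starting values are illustrative choices), a real start produces only real iterates that wander without settling, while a start in the upper half-plane converges to $i$.

```python
def newton_z2_plus_1(z0, steps=20):
    """Newton iterates for f(z) = z**2 + 1, with f'(z) = 2*z."""
    zs = [z0]
    for _ in range(steps):
        z = zs[-1]
        zs.append(z - (z * z + 1) / (2 * z))
    return zs


real_run = newton_z2_plus_1(0.5, steps=10)    # real start: stays on the real line
complex_run = newton_z2_plus_1(0.5 + 0.5j)    # complex start: approaches the root i
print(real_run)
print(complex_run[-1])
```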

Newton's method can also be used as an optimization algorithm, to determine where a function $f$ attains a local minimum or maximum. This amounts to solving the equation $f'(x) = 0$, which gives the Newton iteration

$$x_{k+1} = x_k - \frac{f'(x_k)}{f''(x_k)}.$$
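As a small illustration, the optimization variant replaces the function and its derivative by the first and second derivatives. The sketch assumes the test function $f(x) = x^4/4 - x$, which has its minimum at $x = 1$; the function name `newton_stationary_point` is hypothetical. Note that the iteration finds stationary points in general, so the sign of the second derivative has to be checked separately.

```python
def newton_stationary_point(fprime, fsecond, x0, steps=20):
    """Apply Newton's method to f'(x) = 0 to locate a stationary point of f."""
    x = x0
    for _ in range(steps):
        x = x - fprime(x) / fsecond(x)
    return x


# Assumed example: f(x) = x**4 / 4 - x, so f'(x) = x**3 - 1 and f''(x) = 3*x**2.
x_min = newton_stationary_point(lambda x: x**3 - 1.0, lambda x: 3.0 * x**2, x0=2.0)
print(x_min)
```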

Variations and hybrid methods

The fact that Newton's method may converge slowly or fail to converge for functions with horizontal tangents, or when given poor initial values, makes it unsuitable in raw form as a general-purpose root-finding algorithm. It is therefore typically combined with some mechanism for detecting and correcting convergence failure.

One way to detect failure is to count the number of iterations and stop if the number exceeds some specified limit. Another technique is bracketing: the algorithm is supplied with known bounds for the location of the root. Failure is signaled if the Newton iteration produces a value that lies outside the bounds.

In case of failure, it may be possible to recover by switching to a slower but safer algorithm, such as the bisection algorithm. After taking a few steps with the safe method, one can switch back to Newton's method and try again. This is called a hybrid method. Another option is the damped Newton's method, in which the step is shortened: the update $f(x_k)/f'(x_k)$ is multiplied by a damping factor $\alpha$, with $0 < \alpha \le 1$ (equivalently, the derivative is multiplied by $1/\alpha \ge 1$).
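One possible shape for such a hybrid is sketched below. It is an illustration under stated assumptions, not a reference implementation: the function name, the tolerance, the iteration limit and the rule "bisect whenever the Newton candidate leaves the bracket" are all choices made for the example. The test function is the cubic $x^3 - 2x + 2$, for which plain Newton's method started at 0 is known to cycle.

```python
def newton_bisection(f, fprime, lo, hi, tol=1e-12, max_iter=100):
    """Find a root in [lo, hi]; f(lo) and f(hi) must have opposite signs."""
    f_lo, f_hi = f(lo), f(hi)
    if f_lo == 0.0:
        return lo
    if f_hi == 0.0:
        return hi
    if f_lo * f_hi > 0.0:
        raise ValueError("f(lo) and f(hi) must have opposite signs")
    x = 0.5 * (lo + hi)
    for _ in range(max_iter):
        fx = f(x)
        if fx == 0.0:
            return x
        # Shrink the bracket so that the sign change stays inside [lo, hi].
        if f_lo * fx < 0.0:
            hi = x
        else:
            lo, f_lo = x, fx
        slope = fprime(x)
        candidate = x - fx / slope if slope != 0.0 else None
        if candidate is None or not (lo <= candidate <= hi):
            candidate = 0.5 * (lo + hi)  # Newton step rejected: bisect instead
        if abs(candidate - x) < tol:
            return candidate
        x = candidate
    raise RuntimeError("no convergence within max_iter iterations")


root = newton_bisection(lambda x: x**3 - 2.0 * x + 2.0,
                        lambda x: 3.0 * x**2 - 2.0, -3.0, 0.0)
print(root)
```

The iteration limit plays the role of the failure detection described above, and the bracket guarantees that a rejected Newton step still makes progress.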

Newton's method requires that the derivative of the function be known, but in some situations the derivative (or, for systems of equations, the Jacobian) may be unavailable or prohibitively expensive to calculate. The cost can be higher still when Newton's method is used as an optimization algorithm, in which case the second derivative or Hessian is also needed.

An alternative in these situations is to use an approximation of the derivative or second derivative, which leads to so-called quasi-Newton methods. The most common strategy is to use the function values from two successive iterations to calculate a finite difference approximation for the derivative. This is equivalent to the secant method and reduces the order of convergence from 2 to approximately 1.618 (more precisely, the golden ratio). Another alternative is to compute a value for the derivative or second derivative accurately, but to reuse the same value for several successive iterations.
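The finite difference variant can be sketched as follows, assuming the golden ratio polynomial $x^2 - x - 1$ from the earlier example and the two starting values 1 and 2. The function name and the stopping rule (stop when the two latest function values coincide) are illustrative choices.

```python
def secant(f, x0, x1, max_steps=30):
    """Secant method: Newton's method with a finite difference in place of f'."""
    f0, f1 = f(x0), f(x1)
    for _ in range(max_steps):
        if f1 == f0:
            break  # the finite difference slope would be zero: stop here
        # (f1 - f0) / (x1 - x0) approximates the derivative at x1.
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        x0, f0 = x1, f1
        x1, f1 = x2, f(x2)
    return x1


golden = secant(lambda x: x * x - x - 1.0, 1.0, 2.0)
print(golden)
```

Each step needs only one new function evaluation and no derivative, which is what can make the lower order of convergence worthwhile.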

Modifications of Newton's method can also lead to more specialized algorithms, such as the Durand-Kerner method, which is used to find all roots of a polynomial simultaneously.

Computational complexity

Using Newton's method as described above, the time complexity of calculating a root of a function $f$ with $n$-digit precision, provided that a good initial approximation is known, is $O((\log n) F(n))$, where $F(n)$ is the cost of calculating $f(x)/f'(x)$ with $n$-digit precision.

If $f$ can be evaluated with variable precision, the algorithm can be improved. Because of the "self-correcting" nature of Newton's method, meaning that it is unaffected by small perturbations once it has reached the stage of quadratic convergence, it is only necessary to use $m$-digit precision at a step that produces an approximation with $m$-digit accuracy. Hence, the first iteration can be performed with a precision twice as high as the accuracy of $x_0$, the second iteration with a precision four times as high, and so on. If the precision levels are chosen suitably, only the final iteration requires $f(x)/f'(x)$ to be evaluated at full $n$-digit precision. Provided that $F(n)$ grows superlinearly, which is the case in practice, the cost of finding a root is therefore only $O(F(n))$, with a constant factor close to unity.
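The precision-doubling idea can be illustrated with Python's `decimal` module, here for the square root. This is a sketch under several assumptions: the function name `sqrt_newton`, the three guard digits, and the estimate that the double-precision starting value is correct to about 15 digits are all choices made for the example.

```python
import math
from decimal import Decimal, localcontext


def sqrt_newton(a, digits, guard=3):
    """Approximate sqrt(a) to about `digits` significant digits.

    Each Newton step runs at roughly twice the precision of the previous one,
    so only the last step is carried out at (about) full precision.
    """
    x = Decimal(math.sqrt(a))  # starting value, assumed accurate to ~15 digits
    a = Decimal(a)
    accurate = 15              # digits of x currently assumed correct
    while accurate < digits:
        accurate *= 2          # one Newton step roughly doubles the accuracy
        with localcontext() as ctx:
            ctx.prec = min(accurate, digits) + guard
            x = (x + a / x) / 2  # Newton step for x**2 - a = 0
    with localcontext() as ctx:
        ctx.prec = digits
        return +x              # unary plus rounds x to the requested precision


approx = sqrt_newton(2, 100)
print(approx)
```

For 100 digits, the loop performs three steps at working precisions of 33, 63 and 103 digits, rather than three steps at full precision.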

This property of Newton's method makes it an essential tool for arbitrary-precision arithmetic. Division of large numbers is a good example. Multiplication of large numbers can be done efficiently using the fast Fourier transform, but there is no corresponding fast algorithm for division. However, division is the inverse operation of multiplication, and there is a Newton iteration for finding this inverse that uses only multiplication and subtraction. Therefore, by means of Newton's method, division can be performed nearly as fast as multiplication (in practice, about three times slower). Likewise, the time cost for computing square roots (as the inverse of $x^2$) with Newton's method is proportional to that of multiplication.
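The division-free iteration comes from applying Newton's method to $f(x) = 1/x - d$, which gives $x_{k+1} = x_k (2 - d x_k)$ and converges to $1/d$. The sketch below runs it in ordinary double precision only to show the structure. The scaling with `math.frexp` and the linear starting guess $48/17 - (32/17) m$ are assumptions of the example (a common textbook choice), not something stated in the text above.

```python
import math


def reciprocal(d, steps=5):
    """Approximate 1/d for d > 0 using only multiplication and subtraction."""
    if d <= 0:
        raise ValueError("d must be positive")
    m, e = math.frexp(d)  # d = m * 2**e with 0.5 <= m < 1
    # Assumed linear starting guess for 1/m; the constants are precomputed.
    x = 48.0 / 17.0 - (32.0 / 17.0) * m
    for _ in range(steps):
        x = x * (2.0 - m * x)  # Newton step for f(x) = 1/x - m
    return math.ldexp(x, -e)   # undo the scaling: 1/d = (1/m) * 2**(-e)


def divide(n, d):
    """Compute n / d as n times the Newton reciprocal of d."""
    return n * reciprocal(d)


print(divide(1.0, 3.0), divide(355.0, 113.0))
```

In an arbitrary-precision setting, the same step would be combined with the precision doubling described above, so that the total cost is a small multiple of one full-precision multiplication.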

History

Newton's method was described by Isaac Newton in De analysi per aequationes numero terminorum infinitas (written in 1669, published in 1711 by William Jones) and in De metodis fluxionum et serierum infinitarum (written in 1671, translated and published as Method of Fluxions in 1736 by John Colson). However, his description differs substantially from the modern description given above: Newton applies the method only to polynomials. He does not compute the successive approximations $x_k$, but computes a sequence of polynomials, and only at the end arrives at an approximation for the root $r$. Finally, Newton views the method as purely algebraic and fails to notice the connection with calculus. Isaac Newton probably derived his method from a similar but less precise method by François Viète. The essence of Viète's method can be found in the work of Sharaf al-Din al-Tusi.

Newton's method was first published in 1685 in A Treatise of Algebra both Historical and Practical by John Wallis. In 1690, Joseph Raphson published a simplified description in Analysis aequationum universalis. Raphson again viewed Newton's method purely as an algebraic method and restricted its use to polynomials, but he described the method in terms of the successive approximations $x_k$ instead of the more complicated sequence of polynomials used by Newton. Finally, in 1740, Thomas Simpson described Newton's method as an iterative method for solving general nonlinear equations using fluxional calculus, essentially giving the description above. In the same publication, Simpson also gave the generalization to systems of two equations and noted that Newton's method can be used for solving optimization problems by setting the gradient to zero.

References

- Michael T. Heath (2002), Scientific Computing: An Introductory Survey, Second Edition, McGraw-Hill

- Jonathan M. Borwein & Peter B. Borwein (1987), Pi and the AGM: A Study in Analytic Number Theory and Computational Complexity, Wiley Interscience

- Tjalling J. Ypma (1995), Historical development of the Newton-Raphson method, SIAM Review 37 (4), 531–551
