Operating system

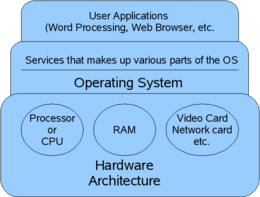

A computer operating system (OS) is a program that is loaded soon after a computer starts up, and whose purpose is to help programmers and users interact with the computer to carry out their intended tasks. On modern desktop computers, people may think that the operating system is the computer, because the behavior of the operating system is what users see. The operating system is supposed to hide the complexities of the underlying hardware from people using the computer. Even gadgets such as cell phones have an operating system, although the people using them may not be aware of its presence or even know its name.

The main part of an operating system is usually called the kernel. The kernel gets assistance from several special programs called drivers (a driver program is an expert on some piece of hardware within the computer, such as the disk drive or the mouse). The kernel also needs assistance from a user interface program.

Phases of Operation

Booting Up

When a computer is first turned on, there is a period during which the machine hardware tests its own components before it is ready to execute software; on IBM-compatible PCs, this phase is called POST (Power-On Self-Test). After POST, the operating system begins to "boot" (load itself into memory). In this phase, the operating system begins testing hardware components and loading more of itself into the computer's memory. The term "booting" comes from the expression "to pull oneself up by one's own bootstraps," which is what the computer and operating system do when first powered up.

Without delving into the specifics of different architectures, the steps to booting up are usually:

- Execution begins in firmware held in non-volatile memory (the BIOS or EFI), which performs several steps in order:

- consult a database kept in firmware to see what hardware is installed

- run diagnostics on the installed hardware

- locate a disk drive that has a "bootable" partition on it

- load a special program (the bootstrap loader) from the "bootable" partition that will then load the operating system

- transfer control to the now-loaded bootstrap program

- The bootstrap loader begins loading the rest of the operating system kernel into memory

- When enough of the operating system is in memory, and if no critical problems were encountered, this phase ends and the Up-And-Running phase begins
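
The steps above can be sketched as a toy simulation. This is only an illustration under stated assumptions: the `Machine` and `Disk` classes, the `firmware_boot` function, and the log messages are all hypothetical names invented for this sketch, and real firmware is far more involved.

```python
# Toy model of the boot sequence; every name here is hypothetical.
from dataclasses import dataclass, field


@dataclass
class Disk:
    name: str
    bootable: bool = False
    bootstrap_loader: str = ""  # stands in for the loader program stored on disk


@dataclass
class Machine:
    devices: list                               # firmware's record of installed hardware
    disks: list
    failed: set = field(default_factory=set)    # devices that will fail diagnostics


def firmware_boot(machine):
    """Return a log of the steps taken, or raise if booting cannot proceed."""
    log = []
    # Consult the firmware's record of installed hardware.
    log.append("found: " + ", ".join(machine.devices))
    # Run diagnostics on each installed device.
    for dev in machine.devices:
        if dev in machine.failed:
            raise RuntimeError(f"diagnostic failed: {dev}")
    log.append("diagnostics passed")
    # Locate a disk that has a bootable partition.
    boot_disk = next((d for d in machine.disks if d.bootable), None)
    if boot_disk is None:
        raise RuntimeError("no bootable disk")
    log.append(f"boot disk: {boot_disk.name}")
    # Load the bootstrap loader and transfer control to it.
    log.append(f"running {boot_disk.bootstrap_loader}")
    # The loader brings the kernel into memory; the Up-And-Running phase begins.
    log.append("kernel loaded; up and running")
    return log
```

Calling `firmware_boot` on a machine whose `failed` set names an installed device raises an error instead of returning a log, which mirrors the way a failed diagnostic stops the boot.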

The Booting-Up phase is largely invisible to users, who experience it as a boring wait right after a computer is turned on. The operating system may display a logo and information about hardware that is probed and tested during this phase. If an error occurs because a piece of hardware fails a diagnostic test and the operating system does not load correctly, the information displayed to a user may be limited to a few terse lines of text. On an IBM-compatible PC, if the POST completes successfully, one short beep is issued and control is handed to the operating system; however, if a problem is found (such as a non-working video card), the POST causes the computer to emit a different pattern of beeps to give some indication of what kind of problem was encountered.

Many capabilities of modern operating systems are not apparent to users and programmers at first. Nowadays, we may take for granted features which pioneer computer users completely lacked. The bootstrap loader is one such feature. The earliest computers did not boot themselves automatically. A programmer had to enter many individual machine instructions by hand just to make the computer able to run an operating system. The invention of bootstrap loaders was one of the earliest of many important evolutionary steps that eventually made computers easier to use. Modern "bootloaders" can even present a menu of operating systems to boot, if the machine has several installed.

Up-And-Running

In this phase, the operating system software is ready to respond to commands from users and allow user programs to run. The majority of this article describes services offered during the Up-And-Running phase.

Closing-Down

In this phase, the computer ceases to respond to user commands and attempts a graceful, orderly shutdown of any running software and hardware. Programs that are running are asked to close, and may prompt the user to save the documents they have open. Programs that have not closed after a specified time are forcefully closed. Then the operating system triggers its internal services to shut down gracefully. Newer computers which conform to the Advanced Configuration & Power Interface (ACPI) standard can even switch the computer off after the operating system has finished shutting down.[1] A graceful shutdown is especially important for saving data in the event of a sudden, unexpected power outage. If a machine is running on an Uninterruptible Power Supply (UPS), the UPS can be configured to trigger the operating system to perform a graceful shutdown if power is lost for longer than the UPS will last.
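
The "ask first, force after a timeout" policy can be illustrated with a small simulation. This is a minimal sketch, not how any particular operating system implements shutdown: the `Program` class, its `seconds_to_close` field, and the `shut_down` function are hypothetical, and no real processes are started or stopped.

```python
from dataclasses import dataclass


@dataclass
class Program:
    name: str
    seconds_to_close: float  # hypothetical: time the program needs to exit cleanly


def shut_down(programs, timeout):
    """Return (closed_cleanly, force_closed) name lists for a simulated shutdown."""
    closed, forced = [], []
    for p in programs:
        # Every program is asked to close; only those that would not
        # finish within the timeout are forcefully closed.
        if p.seconds_to_close <= timeout:
            closed.append(p.name)
        else:
            forced.append(p.name)
    return closed, forced
```

For example, with a timeout of 5 seconds, a program that needs 1 second closes cleanly while one that needs 60 seconds ends up in the force-closed list.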

Typical Services of an Operating System (in the Up-And-Running Phase)

To modularize the functions performed by an operating system, its major responsibilities are usually further divided into subsystems. These subsystems are covered in the sections that follow.

Kernel

A kernel is the part of the operating system program that is responsible for managing the resources provided by the computer, especially the processor and the memory, for interacting with drivers (programs that are expert about a certain component of the hardware), and for managing the file system and network sockets. Such tasks usually involve the need to protect certain resources from user access, so the kernel is also responsible for managing access rights and user identification.

The kernel runs in a special "privileged mode" where it has unrestricted access to all the hardware of the system it is running on. The exact number and kind of services provided by the kernel vary from one operating system to another. There are many different kernel designs, but they tend to fall into a small number of categories, two of which are described here:

- Micro-kernel architectures such as Mach contain only the basic functions within the kernel and run the remaining subsystems in user space, in separate address spaces. The kernel's main function is to coordinate the different subsystems' requests for hardware and processor time. This design encourages modularity, and is intended to increase the kernel's reliability. For example, if the video card driver crashed in a micro-kernel design, only the video subsystem would be affected. The service could even restart automatically, affecting the user only for a short period of time. In other kernel designs, such a low-level driver crash could take the entire system down with it. While compelling in theory, the design of a micro-kernel has proven to be much more complicated in practice, especially considering that all the subsystems' accesses to hardware have to be coordinated. Usually this is accomplished with "message passing," where the different subsystems coordinate access to resources by "passing messages" to the kernel. The complexity of this design grows considerably, however, when factors such as multi-threading and multi-processor machines are taken into account. Minix is a popular example of a micro-kernel architecture.

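- A monolithic kernel is one in which all kernel services, including drivers and the file system, run together in a single privileged address space. Because the subsystems can call one another directly instead of exchanging messages, this generally improves performance, at the cost of the fault isolation that a micro-kernel aims to provide. The Linux kernel is a popular example of a monolithic kernel.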
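
The message-passing idea used by micro-kernels can be sketched in a few lines. This is a toy model under clear assumptions: `MicroKernel`, `video_driver`, and `file_server` are hypothetical names, and ordinary Python functions stand in for subsystems, so there is no real address-space separation here. The sketch only shows how routing every request through the kernel lets one failing subsystem be contained.

```python
from collections import deque


class MicroKernel:
    """Toy kernel: it only routes messages between registered subsystems."""

    def __init__(self):
        self.subsystems = {}     # name -> handler function
        self.mailbox = deque()   # pending (destination, message) pairs

    def register(self, name, handler):
        self.subsystems[name] = handler

    def send(self, destination, message):
        self.mailbox.append((destination, message))

    def run(self):
        """Deliver queued messages in order; a crashing handler is isolated."""
        results = []
        while self.mailbox:
            destination, message = self.mailbox.popleft()
            try:
                results.append(self.subsystems[destination](message))
            except Exception as exc:
                # The failure is confined to one subsystem; delivery carries on.
                results.append(f"{destination} failed: {exc}")
        return results


def video_driver(message):
    raise RuntimeError("video card fault")   # simulate a crashing driver


def file_server(message):
    return f"file server handled {message!r}"


kernel = MicroKernel()
kernel.register("video", video_driver)
kernel.register("files", file_server)
kernel.send("video", "draw window")
kernel.send("files", "open report.txt")
results = kernel.run()
```

After the run, `results` records the video failure and the successful file request side by side: the crash in one subsystem did not stop the other from being served.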

Some kernels are tied to one set of drivers or one user interface, while for others one or both are interchangeable. The Microsoft Windows and Macintosh OS series have only one user interface per kernel, but can interchange drivers to work with different types of hardware. In comparison, the BSD and Linux kernels are not tied to particular hardware or to a particular user interface, and several different drivers and interfaces are available for them.

Process management

Memory management

Storage management

The operating system provides a logical view of information storage by abstracting from the intricacies of storage devices and providing the file as the logical storage unit. The operating system then maps these files onto physical media like hard drives.
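
A toy file system makes the mapping from files to physical blocks concrete. This is a minimal sketch under stated assumptions: `ToyFileSystem`, `BLOCK_SIZE`, and the allocation strategy (take the lowest-numbered free blocks) are invented for illustration and do not describe any real file system.

```python
BLOCK_SIZE = 4  # bytes per block; deliberately tiny so the mapping is easy to see


class ToyFileSystem:
    def __init__(self, num_blocks):
        self.blocks = [b""] * num_blocks       # stands in for the physical medium
        self.free = list(range(num_blocks))    # block numbers not yet in use
        self.table = {}                        # file name -> list of block numbers

    def write(self, name, data):
        """Store a new file by splitting its bytes across free blocks."""
        if name in self.table:
            raise FileExistsError(name)        # overwriting is not modelled
        needed = -(-len(data) // BLOCK_SIZE)   # ceiling division
        if needed > len(self.free):
            raise OSError("disk full")
        used = [self.free.pop(0) for _ in range(needed)]
        for i, block_no in enumerate(used):
            self.blocks[block_no] = data[i * BLOCK_SIZE:(i + 1) * BLOCK_SIZE]
        self.table[name] = used

    def read(self, name):
        """Reassemble a file from its blocks; callers never see block numbers."""
        return b"".join(self.blocks[n] for n in self.table[name])
```

A program using this sketch calls only `write` and `read` with a file name; which blocks hold the data is recorded in `table` and stays hidden from the caller.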

Drivers

Drivers provide a layer of abstraction that allows an operating system to access hardware. With drivers, the operating system needs to know only how to interface correctly with a certain class of driver; the details of the specific piece of hardware are left to the driver itself. For example, video games that ran on the DOS operating system had to be written specifically for the underlying video card, because DOS lacked this abstraction. This made programming for a wide audience difficult, and gamers who had bought one type of video card might not be able to play a new game simply because the game had not been written for their card. Abstracting the "raw hardware" with drivers was one way that this problem was solved. The video game then only had to be written to run on the operating system in question; the driver for the specific video card handled "translating" between the video game's requests and the actual commands sent to the hardware itself.
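
The video card example can be sketched as a common interface with interchangeable implementations. Everything named here is hypothetical: `VideoDriver`, the two card drivers, and the command strings they return are invented for illustration and do not correspond to real hardware or to any real driver API.

```python
from abc import ABC, abstractmethod


class VideoDriver(ABC):
    """The interface the operating system expects from any video driver."""

    @abstractmethod
    def draw_pixel(self, x, y, color):
        """Return the hardware-specific command for drawing one pixel."""


class AcmeCardDriver(VideoDriver):   # hypothetical card
    def draw_pixel(self, x, y, color):
        return f"ACME:PLOT {x},{y},{color}"


class ZetaCardDriver(VideoDriver):   # hypothetical card
    def draw_pixel(self, x, y, color):
        return f"zeta.put(color={color}, pos=({x}, {y}))"


def game_draw_dot(driver):
    """Application code: written once, it works with any VideoDriver."""
    return driver.draw_pixel(10, 20, "red")
```

`game_draw_dot` never mentions a specific card; passing it an `AcmeCardDriver` or a `ZetaCardDriver` produces a different hardware command from the same game code.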

In closed source operating systems, drivers are generally written by the hardware manufacturer, which means the manufacturer can decide which operating system or systems its products support. On open source operating systems such as Linux or the BSDs, if the manufacturer of the hardware does not publish documentation on the hardware, or better yet provide drivers written for these operating systems, the hardware has to be reverse engineered if it is to be supported.

Drivers are often loaded at boot time to ensure correct operation of all hardware. This means that such hardware can only be changed while the computer is off. Plug and play hardware, however, can have its driver loaded into memory as it is plugged in, as long as the driver has already been installed. If drivers are visible from user space, as with the kernel module concept in Linux, it is also possible for a user with sufficient access rights to unload one driver and load another.

User interface

A user interface allows humans to interact with a computer. If a system is not designed to be interacted with directly, it may have no user interface at all, or only a simple one for debugging. The two major tasks of a user interface are to provide access to core functions, and to organize them into as seamless and intuitive a system as possible.

A command line-driven user interface (CLI), such as MS-DOS or one of the many Unix shells, works by parsing and executing text commands. Although a majority of computer users never need to type commands into a console window, programmers and system administrators still use CLIs a great deal; their low memory requirements make them useful for highly specialized purposes, such as computer repair, accessing a computer over a network, or performing a large number of tasks in sequence very quickly.
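
The "parse, then execute" cycle of a CLI can be sketched as follows. This is an illustration only: the `execute` function and the two built-in commands are hypothetical, and a real shell also handles features such as pipes, variables, and launching external programs. The standard library's `shlex.split` is used for Unix-style word splitting.

```python
import shlex


def cmd_echo(args):
    return " ".join(args)


def cmd_add(args):
    return str(sum(int(a) for a in args))


COMMANDS = {"echo": cmd_echo, "add": cmd_add}   # hypothetical built-in commands


def execute(line):
    """Parse one line of text and run the command it names."""
    words = shlex.split(line)        # honours quotes the way a Unix shell does
    if not words:
        return ""
    name, args = words[0], words[1:]
    if name not in COMMANDS:
        return f"{name}: command not found"
    return COMMANDS[name](args)
```

Here `execute("add 2 3 4")` looks up `add` in the command table and passes it the remaining words, while an unknown name yields a "command not found" message rather than an error.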

A graphical user interface (GUI) is usually similar to the windowing system provided by the Microsoft Windows series, with control panels for access to system functions, icons, mouse-controlled pointers, context menus on a right click, multiple windows for multiple programs, and some analogue of the Start button. Some interfaces (such as BumpTop), while graphical, use completely different elements to present control structures.

Much rarer are voice-driven interfaces. These are usually used alongside a GUI, although some devices are beginning to rely on them as the primary interface, either because of their purpose (such as certain GPS navigation systems) or as an experiment.

Evolution of the Operating System

Operating systems as they are known today trace their lineage to the first distinctions between hardware and software. The first digital computers of the 1940s had no concept of abstraction; their operators entered machine code directly into the machines they were working on. As computers evolved in the 1950s and 1960s, however, the distinction between hardware such as the CPU and memory (or "core," as it was then called) and the software that ran on top of it became apparent.

Batch job systems in the 1960s

- IBM 360 series, and JCL (Job Control Language):

Batch operating systems could only execute one program at a time. The operating system maintained a queue of user programs which had been submitted and were waiting for a chance to execute. Each user program which needed to execute was called a "job". A human "operator" watched over the queue with the ability to move some jobs to the front or back, or kill a job which got hung or ran too long. Some users had higher priorities than others.
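
The job queue described above can be modelled with a priority queue. This is a sketch under stated assumptions, not a description of any historical system: `BatchQueue` and its methods are hypothetical, and the convention that lower priority numbers run sooner is a choice made for this example.

```python
import heapq
import itertools


class BatchQueue:
    def __init__(self):
        self._heap = []
        self._order = itertools.count()   # tie-breaker: submission order

    def submit(self, name, priority=5):
        """Queue a job; lower numbers run sooner (an assumption of this sketch)."""
        heapq.heappush(self._heap, (priority, next(self._order), name))

    def kill(self, name):
        """Operator action: remove a waiting job from the queue."""
        self._heap = [entry for entry in self._heap if entry[2] != name]
        heapq.heapify(self._heap)

    def run_all(self):
        """Run jobs strictly one at a time, returning the execution order."""
        finished = []
        while self._heap:
            _, _, name = heapq.heappop(self._heap)
            finished.append(name)   # a real system would execute the job here
        return finished
```

Jobs with equal priority run in the order they were submitted, a higher-priority job jumps ahead of them, and a job removed with `kill` never runs.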

Time sharing systems in the 1960s and 1970s

- CTSS / ITS

- Multics / Unix

Unix was one of the first widely successful "timesharing" operating systems, building on earlier systems such as CTSS and Multics. In a time-sharing system, multiple programs appear to execute at once, but in reality, the fast-working computer processor is alternating quickly among several different programs; at any one instant in time, only one program executes, with all others waiting. To users, the illusion is that they have the processor to themselves, because each program (called a "process" while it runs) seems to run simultaneously with others. In a timesharing operating system, the operating system is responsible for "scheduling" each process (determining when and how long each process gets to run, and making sure that every process regularly gets some time in which to run).
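
Round-robin scheduling is one simple way to give every process a regular turn. The simulation below is a minimal sketch: the `round_robin` function, the process names, and the time units are hypothetical, and real schedulers also weigh priorities, I/O waits, and much else.

```python
from collections import deque


def round_robin(work, quantum):
    """Simulate time slicing on a single processor.

    work maps a process name to the time units it still needs; quantum is the
    longest a process may run before the scheduler switches to the next one.
    Returns the (name, units_run) slices in the order they executed.
    """
    ready = deque(work.items())
    timeline = []
    while ready:
        name, remaining = ready.popleft()
        ran = min(quantum, remaining)
        timeline.append((name, ran))
        if remaining > ran:
            ready.append((name, remaining - ran))   # unfinished: back of the queue
    return timeline
```

With `round_robin({"editor": 3, "compiler": 5, "mail": 1}, quantum=2)`, the short `mail` process gets its turn after only two slices instead of waiting for the longer jobs to finish, which is the source of the illusion that all three run at once.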

The dawn of the Personal Computer in the 1970s and 1980s

The big three:

- Tandy/RadioShack TRS-80

- Commodore PET

- Apple II

In the late 1970s and early 1980s the concept of someone owning a computer and having it sit on a desk at their house (or place of business) became feasible. A commonly cited example of one of the first "personal computers" was the Altair. Computers in that generation, lacking a keyboard or even a standard display, were more of a hobbyist's toy than the PCs that we know today. The Homebrew Computer Club was one example of the computer clubs that sprang up in this era.

Computers as we know them today evolved from these hobbyist machines into something that was more accessible to consumers. The "big three" manufacturers that ignited this era were Tandy/Radio Shack, Apple Computer and Commodore. Conspicuously absent was IBM, which would not enter the PC market until the early 1980s.

As hardware evolved and desktop computers became common, so too did the operating systems that ran on them.

Graphical User Interface (GUI) operating systems in the 1980s and 1990s

- Xerox PARC's Alto (the inspiration for the GUI)

- Apple Macintosh

- Microsoft Windows

Operating systems today (2007)

Except for small embedded computers, most operating systems today perform timesharing similar to the strategy used for the original Unix operating system. But in fact, modern operating systems do it on two levels:

- the processor is shared among processes (running programs)

- the processor may also be shared among multiple threads within one process, allowing a single program to appear to do several things simultaneously
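
The second level, several threads inside one process, can be shown with Python's standard `threading` module. This is a small sketch: the `count_in_parallel` function and its two worker tasks are hypothetical examples, chosen only to show two threads of one program sharing the same data.

```python
import threading


def count_in_parallel(text):
    """Run two small tasks as separate threads of the same process."""
    results = {}   # shared by both threads because they live in one process

    def word_count():
        results["words"] = len(text.split())

    def char_count():
        results["chars"] = len(text)

    threads = [
        threading.Thread(target=word_count),
        threading.Thread(target=char_count),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()   # wait until both threads have finished
    return results
```

Both threads write into the same `results` dictionary, which is possible because threads of one process share memory; separate processes would need the operating system's help to exchange data.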

Examples of popular operating systems

For computers:

For smartphones:

Bibliography

- Tanenbaum, Andrew S; Woodhull, Albert S. Operating Systems: Design and Implementation. Prentice Hall, 2006.

References

- [1] "ACPI Overview" (retrieved 2007-04-27).